Looking for help. How do I set up the ZED 2i in Unreal Engine 5 to connect a live camera to a virtual camera? I am not a tech guy, so please explain it step by step. Thanks in advance.

Hi,

First, you have to build our Live Link sample code (available at https://github.com/stereolabs/zed-livelink-plugin).

The README explains how to build and run this code. You can also look at our documentation: https://www.stereolabs.com/docs/livelink/ or the official UE documentation on Live Link: https://docs.unrealengine.com/5.0/en-US/using-live-link-data-in-unreal-engine/.

I made a new version for virtual production applications. It only sends the camera tracking data, whereas the other versions were made for avatar/skeleton tracking applications.

You can find it on this other branch : https://github.com/stereolabs/zed-livelink-plugin/tree/virtual-prod-version

As you can see, there is an already built version in “Release/win64/” (or linux/ if you are on Ubuntu).

You can use this executable directly, but you won’t be able to modify the camera parameters.

To change them, you need to modify the source code of the sample and build it yourself by following the guide available in the GitHub repository.

For the Unreal side, you should look directly at Epic's documentation to see how to apply this data to a virtual camera: https://docs.unrealengine.com/5.0/en-US/using-live-link-data-in-unreal-engine/.

If you have any questions, do not hesitate to ask.

Best regards,

Benjamin Vallon

Stereolabs Support

Initially it seemed very confusing, but I got it. Thanks for your support. It is now working in UE5 as well. I have one more question: is it possible to do motion capture with the ZED 2i? If so, what is the process in UE5?

Hi,

You can indeed animate 3D models based on your movements using a Live Link sample (the version available on the main branch of the GitHub repo).

All the information about that is on our website (https://www.stereolabs.com/docs/livelink/).

Note that you have to build this sample yourself (unlike the one I shared yesterday). The step-by-step guide is available on the documentation page.

Best,

Benjamin Vallon

Stereolabs Support

In the documentation you refer to, every highlighted hyperlink leads only to the home page. Why don’t you make a video tutorial for each feature of the ZED 2i? I think that would be very helpful. Even for yesterday's problem, I followed Ramiro's YouTube video.

Nowadays virtual production and mocap are trending. You could be the best alternative to Retracker, Intel RealSense, and Vive trackers. Please help.

Hi, I don’t see any built version on your GitHub. Can you provide a link, please?

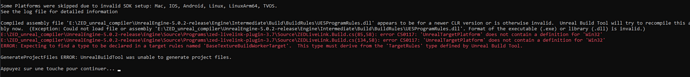

I get this error when I try to compile it.

Hey, I got the same error. Did you find a solution?

Edit: I got it working to some extent with 4.27, so maybe the error comes from UE5.02.

Hi,

I pushed a fix for that error in the main branch of the repo.

All you have to do is remove the `else if (Target.Platform == UnrealTargetPlatform.Win32)` branch in the Build.cs file, as Win32 is no longer supported in UE5.
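For anyone unsure what that edit looks like, here is a rough sketch of a Build.cs after the change. It is only an illustration: the class name `ExampleLiveLinkModule`, the dependency list, and the Win64/Linux branches are assumptions, and the actual file in the repo will differ. The only part taken from this thread is that the Win32 branch has to go.

```csharp
using UnrealBuildTool;

// Hypothetical module name, used only for illustration.
// The plugin's real Build.cs has its own class name and settings.
public class ExampleLiveLinkModule : ModuleRules
{
    public ExampleLiveLinkModule(ReadOnlyTargetRules Target) : base(Target)
    {
        // Assumed dependency list; keep whatever the original file declares.
        PublicDependencyModuleNames.AddRange(new string[] { "Core", "CoreUObject", "Engine" });

        if (Target.Platform == UnrealTargetPlatform.Win64)
        {
            // Win64-specific settings stay unchanged.
        }
        // Removed for UE5: the branch that started with
        //     else if (Target.Platform == UnrealTargetPlatform.Win32)
        // is deleted together with its body, because UE5 no longer
        // defines UnrealTargetPlatform.Win32 and the check fails to compile.
        else if (Target.Platform == UnrealTargetPlatform.Linux)
        {
            // Linux-specific settings stay unchanged.
        }
    }
}
```

If the Win32 branch in your copy has a body in braces, remove the braces and their contents along with the condition so the remaining `if`/`else if` chain stays valid.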

Best,

Benjamin Vallon

Open the file as administrator.