Camera working and all ZED modules. Spent 3 days with ChatGPT 5.4 - extended thinking, on this problem, but getting nowhere, stumbling from one mud-pool to another. The user walks with a ZED2i (2.1mm lens, 4 if needed) camera in front, at hip height, so first 1.5 m path ahead cannot be read. Idea is to fuse frames (if needed) to measure and store an accurate roughness of the path. It will instruct the motor to lift a foot up higher, if a 10-15 mm obstruction could cause the user to stumble and fall. We tried everything, but are not even getting a proper floor in static indoor conditions, that even detects a thick plank on the floor… Any help to point us in the right direction would be immensely appreciated, as I am loosing faith ChatGPT can help… Have programmed in the past, but no experience with Ubuntu… but understand it when reading it. Thanks for any help, Jules.

Before I forget… The ZED2i is plugged in a Nvidia Orin NX 16Gb. The PY program is generated by ChatGPT5.4 plus extended thinking on a separate Ubuntu laptop 22.04…

Hi @Jules

I’m sorry for your bad experience with our device.

Can you please add pictures and/or drawings that describe your project, your setup, and your goal?

They will allow us to better understand the operational conditions and help you configure the camera to obtain the best possible performance.

It would also be useful if you could share the source code of the Python application.

Hi Walter Myzhar,

Thank you for responding so quickly. I am really pleased that you have taken it upon you to look at my case, as I have seen many of your answers elsewhere; always concise, efficient and the best in my view.

By the way, I did not have a bad experience with the ZED2i at all; it works perfectly and is in my view the very best 3D camera with support software on the market, but it was the ChatGPT making the PY programs, that just did not seem to get anywhere…

I have written the explanation in MS Word (Hi Walter Myzhar.doc), as I normally like to print certain topics out, and read them in ‘comfort’, hope this is OK.

Hi Walter Myzhar.docx (18.1 KB)

zed_floor_sanity_test_v23.py (18.0 KB)

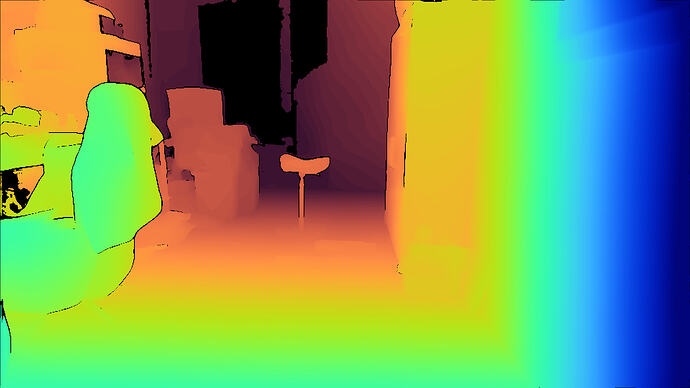

At first sight, these pictures show exposure issues that could be mitigated with a few tips.

If the camera is not pointing forward horizontally, but you add a little tilt to focus on the floor obstacles, you can reduce the noise generated by the sunlight or the artificial lights.

You can also use the ROI (Region of Interest) VIDEO_SETTING to select what area of the image to use to tune the camera exposure.

This can allow you to focus on the floor, ignoring the not relevant zones.

Examples on GitHub:

Hi Myzhar,

In answer to your reply; the camera is already pointing down (20-30 deg.). ChatGPT and I are aware the we can set the ROI and have done that in other trials, without major differences, maybe faster processing speed. OR would that change the level of details ?

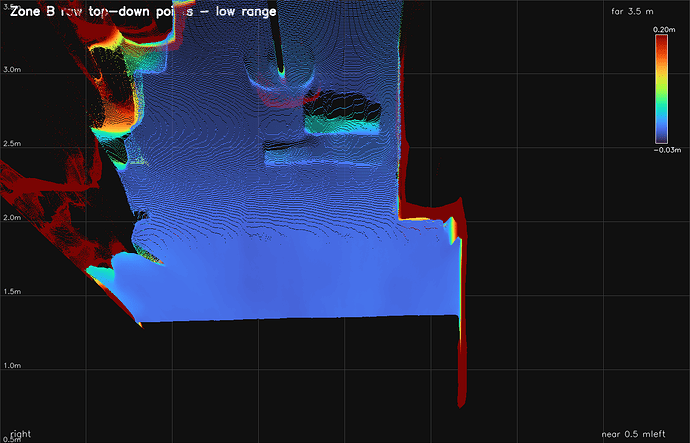

I am still wondering about the amount of detail that it can potentially pick up from the floor-plane, as shown in Zone_B_raw_topdown_points_low-range.png (2nd picture from top). Are the few details it shows, be the maximum possible, or what could be adjusted to improve the detail level ?

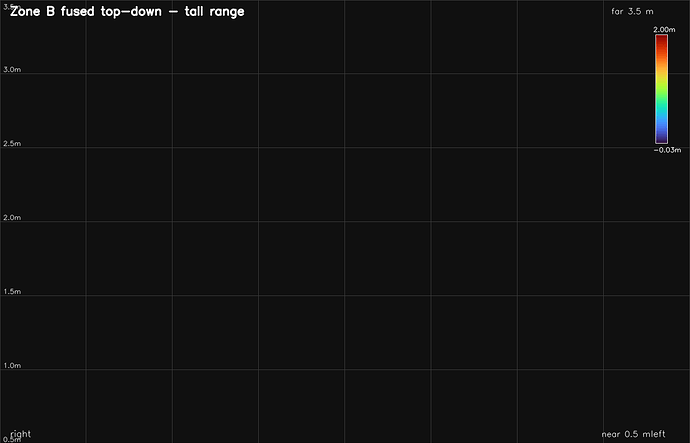

2nd is; that somehow, nothing above 0.5 m is picked up… (Zone_B_fused_topdown -tall range - 5th picture) any idea why ?

Thanks and kind regards,

Jules

You probably set the Region of Interest for Depth processing, not the Region of Interest for Automatic Exposure and Gain processing. Please verify it. If you share your code, I can check.

The theoretical depth accuracy depends on the distance from the object (see the datasheet of the camera), but the quality of the image can affect it.

Hi Myzhar and Walter Lucetti,

I got the same answer from both of you, so addressed both of you.

Indeed we have ONLY set the ROI for Depth and not Exposure and Gain… I will change that !

Myzhar mentioned in his last email that accuracy is about 1% for the ZED2i for depth detail. We have adjusted it so that we have a sliding scale of confidence and detail as we get closer.

It is not useful as yet to share the code, because we are still in the pseudocode stage for quick detecting errors by ChatGPT and constantly improving the code. I will share it all in the end for the general public, because you and Walter have helped me also. Many many thanks again for that

Kind regards,

Jules

Since then I found out that ChatGPT was doing his own floor-plane fitting, it wanted to do its own fitting and security and removing unsecure data… I have now restarted the code with ONLY Zed routines… My oh my… ChatGPT is not half as smart as I thought, only regurgitating what I write, but can find info very quickly. I have no fear anymore that it will take over the world… (never had) So for the moment, I am trying new ways to accomplish what I want. Thank you for your answers which really did tell me things to improve…

I will be back.

Kind regards, Jules

PS slight delay in my answers, as I did not know how to send the reply…

We are indeed the same person ![]()

AI agents are good, and they help you write code quickly, but you must always check and validate all the replies they provide because it’s not rare that they provide wrong code.

Hi Myzhar,

I do have a problem you can hopefully answer…

What would be the best sequence of code-routines to build a world_map, which is just a 4 meter wide x 0.5 -16 m long corridor the user walks in, divided in bands at 0.5-2, 2-3, 3-4, 4-8 & 8-16 m. of different size cells (2,3,4,8,16 cm cell-size)

ONCE AT START:

Initialize camera and set up bands with cells

EVERY FRAME:

-Fit plane (before ROI ?; because more points available for fitting accuracy?)

-Apply ROI for depth and for exposure

-Determine heights of ONLY cells, not all points (can that be done ?)

-Transpose cells to world_map and use for foot-lifting at objects.

If you agree with this set up I will suggest that to CODEX

Is there any specific routine that is good at detecting height differences above the fitted plane ?

Thanks and kind regards, Jules.

Please refer to this section of the documentation for best practices: