Hi there,

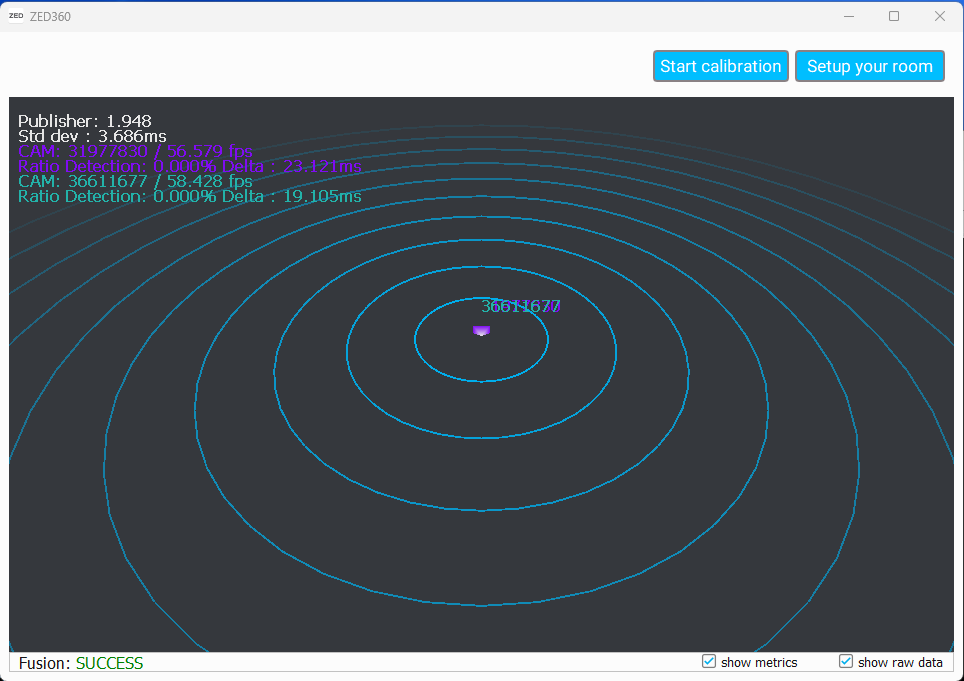

I'm having no luck with the ZED360 fusion calibration.

I have written a sender app for two Jetsons (each with a ZED2i), but in the ZED360 app both streamed cameras end up at the same location. When I click Start Calibration nothing happens: no bodies are detected, and both cameras stay placed at the same point, whereas in the real world they are 3 m apart.

Both the senders and the PC receiver use ZED SDK 4.0.7.

Here is the relevant part of the sender app:

```cpp
// Camera settings come from our own config struct (zed_config)
init_parameters.camera_resolution = zed_config.resolution;
init_parameters.camera_fps = zed_config.fps;
init_parameters.sdk_verbose = zed_config.debug_output;

// Open the camera
auto returned_state = zed.open(init_parameters);
if (returned_state != ERROR_CODE::SUCCESS) {
    std::cout << "Open camera error: " << returned_state << ", exiting program." << std::endl;
    exit(EXIT_FAILURE);
}

// The publishing port is derived from the configured camera port
int port = zed_config.cam_port + 100;
std::cout << "Publishing port: " << port << std::endl;

// Publish this camera's data over the local network
CommunicationParameters configuration;
configuration.setForLocalNetwork(port);

returned_state = zed.startPublishing(configuration);
if (returned_state != ERROR_CODE::SUCCESS) {
    std::cout << "Publishing initialization error: " << returned_state << std::endl;
    zed.close();
    return EXIT_FAILURE;
} else {
    std::cout << "Started publishing... " << returned_state << std::endl;
}

// Grab frames until a grab fails or the app is asked to close
RuntimeParameters runtime_parameters;
while (!isClosing) {
    returned_state = zed.grab(runtime_parameters);
    if (returned_state != ERROR_CODE::SUCCESS) {
        isClosing = true;
    }
}
```

What am I doing wrong here?