Hey,

thanks for the new geotracking module, I think it could be very useful.

However, I tried to integrate it into one of my pipelines and was confused about the results.

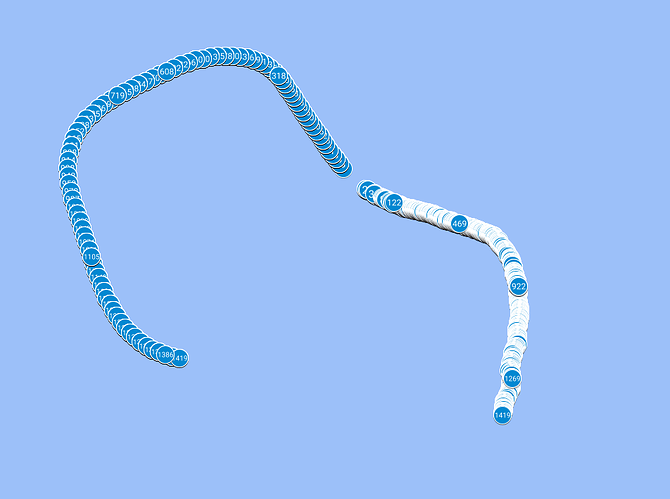

In particular, I loaded GNSS data that I captured during a data acquisition mission. For each video frame that is loaded, I take the GNSS datum/location closest to it in time. I feed the lat/long/alt into the fusion algorithm and save the result as a KML file. Similarly, I save the “raw” GNSS data as a KML file. The attached picture shows the result when I load both KML files into MyMaps from Google Maps: the starting position differs, and so does the trajectory. Am I overlooking something? (The right trajectory is the fused one, the left the raw one.)
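The nearest-fix lookup described above can be sketched roughly as follows. This is a minimal illustration and not the code from the attached geotracking.py; the function name `nearest_fix` and the 500 ms tolerance are hypothetical, and timestamps are assumed to be epoch milliseconds sorted in ascending order.

```python
from bisect import bisect_left


def nearest_fix(gnss_ts_ms, frame_ts_ms, max_gap_ms=500):
    """Return the index of the GNSS fix closest in time to a frame, or None.

    gnss_ts_ms must be sorted ascending (epoch milliseconds).
    max_gap_ms is an illustrative tolerance, not a value taken from the SDK.
    """
    if not gnss_ts_ms:
        return None
    i = bisect_left(gnss_ts_ms, frame_ts_ms)
    # Only the fix just before and the fix just after the frame can be closest.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(gnss_ts_ms)]
    best = min(candidates, key=lambda j: abs(gnss_ts_ms[j] - frame_ts_ms))
    if abs(gnss_ts_ms[best] - frame_ts_ms) > max_gap_ms:
        return None  # No fix is close enough, so this frame gets no GNSS datum.
    return best
```

Rejecting pairs that are too far apart makes a clock offset between the two recordings show up as missing matches rather than as silently wrong pairings.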

It would be really nice if you could help me pinpoint the source of my confusion. You can also find the adapted geotracking.py attached.

Thanks!

geotracking.py (15.6 KB)

Hello @Ben93kie,

Thank you for your message. We appreciate your feedback, as it helps us improve our product. Based on your description, it appears that your issues can be attributed to the following factors:

- Synchronization: To fuse GNSS data and camera data effectively, a synchronization step is necessary. In our SDK, we have incorporated a synchronizer that relies on timestamps. I noticed in your sample that you are providing GNSS data with second-level timestamps. Although GNSS data is typically provided at a frequency of 1 Hz, second-level timestamps are not precise enough for our SDK, especially considering that cameras can run at a frequency of 100 Hz. To ensure accurate synchronization, please provide timestamps with at least millisecond precision. I will make sure to include a warning in the SDK regarding this requirement. I will also ensure that this issue is addressed and fixed in the GNSS Python sample code.

Additionally, it seems that you are reconstructing the GNSS timestamp based on the current and previous timestamps. This approach may result in inaccurate GNSS timestamps. It is recommended to use the actual captured GNSS timestamps with millisecond precision. This will greatly improve the synchronization process.

- Geotracking Initialization: In order to obtain a fused position between the camera and GNSS data, it is necessary to compute the transformation between the camera and GNSS reference frames. Based on the figure you shared, it seems that either this initialization step is not being performed or it is being executed incorrectly. This issue may be related to the synchronization problem mentioned earlier. In a future release, we plan to address this behavior by ensuring that a good initialization is obtained before providing the geoposition.
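To illustrate what computing a transformation between the camera and GNSS reference frames involves, here is a simplified planar sketch. It is not the SDK's method, which is not described in this thread. It assumes the GNSS fixes have already been converted to local east/north meters and paired in time with camera positions; `fit_rigid_2d` and `apply_rigid_2d` are hypothetical helpers.

```python
import math


def fit_rigid_2d(local_xy, enu_xy):
    """Least-squares rotation + translation that maps local_xy onto enu_xy.

    Returns (theta, tx, ty) such that enu ~= R(theta) * local + (tx, ty).
    """
    n = len(local_xy)
    if n < 2 or n != len(enu_xy):
        raise ValueError("need at least two matched point pairs")
    # Centroids of both point sets.
    lx = sum(p[0] for p in local_xy) / n
    ly = sum(p[1] for p in local_xy) / n
    ex = sum(q[0] for q in enu_xy) / n
    ey = sum(q[1] for q in enu_xy) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(local_xy, enu_xy):
        ax, ay = px - lx, py - ly
        bx, by = qx - ex, qy - ey
        dot += ax * bx + ay * by    # proportional to cos(theta)
        cross += ax * by - ay * bx  # proportional to sin(theta)
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated local centroid onto the ENU centroid.
    tx = ex - (c * lx - s * ly)
    ty = ey - (s * lx + c * ly)
    return theta, tx, ty


def apply_rigid_2d(theta, tx, ty, point):
    """Apply the fitted transform to one local (x, y) point."""
    c, s = math.cos(theta), math.sin(theta)
    x, y = point
    return (c * x - s * y + tx, s * x + c * y + ty)
```

If the point pairs are mismatched in time, the fitted rotation and offset are wrong for every later position, which is one way a synchronization problem can turn into a shifted or rotated fused track.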

If none of the above suggestions resolves your issue with obtaining accurate geotracking positions, please provide us with the SVO and GNSS data. This will enable us to investigate whether any other underlying issues are affecting your results.

Thank you once again for reaching out to us. If you have any further questions or concerns, please let us know.

Best regards,

Tanguy

Hi Tanguy,

thanks for your response!

Wrt your comments on synchronization:

I do have timestamps with millisecond precision, as far as I can tell. Therefore, I’m not sure what you mean when you refer to second-level timestamps.

Furthermore, the problem with taking the absolute timestamp instead of the differences is that, in a recorded SVO, the command `sl.get_current_timestamp()` yields the current time, not the time at which the video was recorded. Is that correct, or am I overlooking something?

Wrt your comments on initialization:

Please see the attached txt (SEARCHING_GNSS), I think this is what you referred to as initialization phase. Is there anything weird going on?

If you could take a look at my SVO and GNSS data, that would be awesome. I uploaded it here. I think you just need pyubx2 to load the GNSS data. Find them here:

SEARCHING_GNSS.txt (8.3 KB)

Hello @Ben93kie,

Thanks for the data. I reviewed it and found several possible issues.

- There is a gap between the GNSS and camera timestamps, which makes synchronization between GNSS and camera impossible. You must record the GNSS data and the SVO together; here is a sample for doing so: zed-sdk/geotracking/recording at master · stereolabs/zed-sdk · GitHub.

To retrieve the current timestamp from an SVO, you can use the timestamp field of sl::Pose, for example:

    # Assumes zed, fusion, odometry_pose and camera_pose were created beforehand.

    # Retrieve timestamp from ZED
    if zed.grab() == sl.ERROR_CODE.SUCCESS:
        zed.get_position(odometry_pose, sl.REFERENCE_FRAME.WORLD)
        timestamp_in_ms = odometry_pose.timestamp.get_milliseconds()

    # Retrieve timestamp from Fusion
    if fusion.process() == sl.FUSION_ERROR_CODE.SUCCESS:
        fused_tracking_state = fusion.get_position(camera_pose, sl.REFERENCE_FRAME.WORLD)
        # Read the timestamp from the pose that fusion just filled in
        timestamp_in_ms = camera_pose.timestamp.get_milliseconds()

- It seems that you ingest a constant covariance for the GNSS data. We use this covariance when fusing the GNSS data, so if it is over- or underestimated, the fusion results will be bad.

- It seems that the first frames of your SVO are overexposed. This leads to bad tracking results, which in turn cause a bad initialization.

- Do not use second-precision timestamps for GNSS data; use millisecond precision instead. For example:

    ts = sl.Timestamp()
    ts.set_milliseconds(current_gnss_timestamp)  # instead of set_seconds
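Why the unit matters can be sketched in plain Python, without the SDK. The sketch assumes that a seconds-based setter stores whole seconds and drops the fractional part, which has not been verified against the SDK here; `to_epoch_ms` is a hypothetical helper and the timestamp is just an example value.

```python
def to_epoch_ms(seconds):
    """Convert epoch seconds (possibly fractional) to integer milliseconds."""
    return round(seconds * 1000)


# Example GNSS timestamp in fractional epoch seconds (illustrative value).
gnss_time_s = 1684656199.390

kept_ms = to_epoch_ms(gnss_time_s)         # keeps the .390 s part
truncated_ms = int(gnss_time_s) * 1000     # what whole-second storage would keep
lost_ms = kept_ms - truncated_ms           # error introduced by truncation

print(kept_ms, truncated_ms, lost_ms)
```

At a camera rate of 100 Hz, an error of a few hundred milliseconds corresponds to dozens of frames, so a fix can end up paired with the wrong part of the trajectory.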

If you have any further questions or concerns, please let us know.

Best regards,

Tanguy


Hi Tanguy,

thanks for looking over the data.

To your points:

- I noticed that I uploaded the wrong video (drei_repaired.svo) instead of the correct one (finally_repaired.svo), sorry! I re-uploaded the correct video, along with a version in which the first 10 seconds are cut to remove the overexposed part. If you want to have another look, please find it online.

- Sadly, the particular sensor I’m using doesn’t give me any covariance measurements. With other sensors I will definitely provide them.

- As mentioned above, I created a new SVO with the first 10 seconds cropped. This does not change the offset, as can be seen in the plot (upper cluster: GNSS only; lower cluster: fused). There are 10(!) meters between the last GNSS-only measurement and the first fused measurement.

- I changed it to milliseconds, but I thought it would make no difference, since the seconds value still has decimal places and so should result in the same number. I’m not sure, though.

Lastly, two questions:

- Generally, I wonder which algorithm you use under the hood. Is it some kind of Kalman filter?

- Can I also use a version that fuses only IMU and GNSS, without visual data? I feel that in water surface conditions the visual data might not be beneficial at times.

Thanks!

I will record my data with the provided script and try to redo some of the experiments. I have to develop on Windows, though, so the GNSS service part is a bit awkward: I might use Windows Subsystem for Linux, but I’d want to send the output somewhere else in Windows, which makes it a bit challenging.

Hello Ben93kie,

- I rechecked your data, and it seems that there is still a timestamp gap between your SVO and your GNSS data:

SVO first timestamp: Sunday, May 21, 2023 12:07:52.475 PM GMT+02:00

GNSS first timestamp: Sunday, May 21, 2023 10:03:19.390 AM GMT+02:00

(I do not know whether this is a GMT issue in your recorder, but even ignoring the hour there is still a difference of about 4 minutes between the SVO and GNSS data.) When recording GNSS data, you should always store the timestamp as a number of milliseconds since the epoch.

Do you record the SVO and GNSS data together, or in different runs?

- It’s really unfortunate that you don’t have covariance data. Our algorithm uses the covariance to fuse the data, so without it, it will be hard to obtain good results.

- Yes, it is better with this modification.
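The timestamp gap quoted above can be reproduced with the standard library. This is a small sketch: the two datetimes are the first SVO and GNSS timestamps listed earlier in this post, and `epoch_ms` is a hypothetical helper that converts a timezone-aware datetime to milliseconds since the epoch.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def epoch_ms(dt):
    """Milliseconds since the Unix epoch for a timezone-aware datetime."""
    if dt.tzinfo is None:
        raise ValueError("naive datetime: attach a timezone first")
    # Integer division of timedeltas avoids floating-point rounding.
    return (dt - EPOCH) // timedelta(milliseconds=1)


GMT_PLUS_2 = timezone(timedelta(hours=2))
svo_first = datetime(2023, 5, 21, 12, 7, 52, 475000, tzinfo=GMT_PLUS_2)
gnss_first = datetime(2023, 5, 21, 10, 3, 19, 390000, tzinfo=GMT_PLUS_2)

gap_ms = epoch_ms(svo_first) - epoch_ms(gnss_first)
print(gap_ms)              # 7473085 ms, i.e. 2 h 4 min 33.085 s
print(gap_ms % 3_600_000)  # 273085 ms, i.e. about 4.5 min once whole hours are ignored
```

Storing both streams as integer epoch milliseconds makes this kind of check a single subtraction and removes any ambiguity about time zones.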

Regarding your last questions, I am not authorized to share this kind of information. Sorry!

Hi Tanguy,

thanks for rechecking:

- Yes, the GNSS recording was started earlier. In the script, however, I always take the GNSS measurement closest to the current SVO frame (I skip the first 4 minutes of GNSS). I do have millisecond-precision measurements (the file is in a u-blox-compatible GNSS format); they are just in a different format within Python.

- All right, I’ll redo the experiments with another sensor that gives me covariance.
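Until a receiver with covariance output is available, a rough per-fix covariance could be derived from accuracy estimates, if the log contains them. UBX-NAV-PVT messages, for instance, carry horizontal and vertical accuracy estimates (hAcc, vAcc) in millimeters; whether this particular recording includes them is an assumption. The sketch below is not an SDK function: `covariance_from_accuracy` is hypothetical, it splits the horizontal variance equally between east and north, and the row-major 3x3 ENU layout in square meters is assumed to be what the fusion input expects.

```python
def covariance_from_accuracy(h_acc_mm, v_acc_mm):
    """Build a row-major 3x3 ENU position covariance (m^2) from accuracy estimates in mm."""
    if h_acc_mm <= 0 or v_acc_mm <= 0:
        raise ValueError("accuracy estimates must be positive")
    h_acc_m = h_acc_mm / 1000.0
    v_acc_m = v_acc_mm / 1000.0
    # Assumption: horizontal error is isotropic, so east and north
    # each get half of the horizontal variance.
    var_horizontal = h_acc_m ** 2 / 2.0
    var_up = v_acc_m ** 2
    return [
        var_horizontal, 0.0, 0.0,
        0.0, var_horizontal, 0.0,
        0.0, 0.0, var_up,
    ]
```

The point is that the covariance then varies with signal quality from fix to fix instead of staying constant, which is what the fusion relies on according to the replies above.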

Thanks for your help! I might post some updates here soon if you don’t mind.

With regards to the fusion version without visual data:

I’m not sure if this is the right place, but maybe it would be nice to have a fusion version without visual data at some point (as an option or similar).

Hi again,

I did some more tests. Unfortunately, I still only have a sensor without covariance data. However, it seems to me that there must be another error.

Find a comparison of the two trajectories here:

It seems to me that there is a symmetry error somewhere. Both trajectories were captured at the same time using your capture script (since I’m on Windows, I can’t use the service and use my own logic instead).

I believe it would be OK if I mirrored lat, but I’m not sure.
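One thing that is cheap to rule out when a track looks mirrored in a KML viewer is the coordinate order: KML `<coordinates>` entries are written as longitude,latitude,altitude, so writing latitude first flips the track across the diagonal. This is only a possible cause, not a diagnosis of this data; `kml_coordinates` is a hypothetical helper and the fix in the usage example is made up.

```python
def kml_coordinates(fixes):
    """Format (lat, lon, alt) fixes as the text of a KML <coordinates> element."""
    parts = []
    for lat, lon, alt in fixes:
        if not (-90.0 <= lat <= 90.0 and -180.0 <= lon <= 180.0):
            raise ValueError(f"implausible lat/lon: {lat}, {lon}")
        # KML order is longitude first, then latitude, then altitude.
        parts.append(f"{lon:.7f},{lat:.7f},{alt:.2f}")
    return " ".join(parts)


print(kml_coordinates([(48.5, 9.05, 400.0)]))  # 9.0500000,48.5000000,400.00
```

Running both the raw and the fused positions through the same writer also guarantees that any remaining difference between the two tracks comes from the data and not from the export.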

Have you had any similar experience at some point?

Thanks!

Hello Ben93kie,

It seems that the image wasn’t uploaded correctly. However, your problem seems to be linked to a synchronization/initialization issue. Could you share your re-recorded data so we can be sure?

Regards,

Tanguy