Hello, is it possible to supply custom depth images to the body tracking example, perhaps by emulating a ZED camera? I’d like to do so from an AR headset, which provides all the necessary sensor intrinsics and extrinsics as well as a steady stream of depth/RGB/stereo data, if there is a way to set these parameters.

In this answer (Is Body tracking works on lying people? Or How can I rotate svo file? - #2 by Myzhar), the commenter mentions we can use a live stream. Is this exclusive to ZED cameras? If the SDK does not allow for this customization out of the box, would adding it be a major task?

Thanks so much!

Hi @austinbhale

the ZED SDK can integrate with custom object detection to provide 3D tracking:

https://www.stereolabs.com/docs/object-detection/custom-od/

This works only with bounding boxes, not with human skeletons, because a custom detector is not normally needed for skeletons.

Can you explain your requirements in more detail?

Hi @Myzhar,

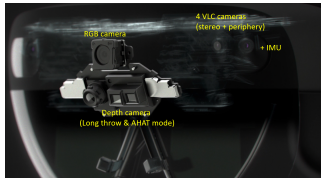

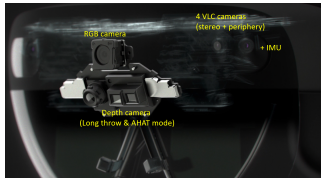

Thank you for responding so quickly! @austinbhale and I would like to feed in our own sensor streams from the HoloLens AR headset to perform body-tracking with the zed sdk, instead of using the ZED live stream or an svo file, perhaps using the set_from_stream function? In order to do so, we would need to know what information the z SDK needs and in what format. Using the headset’s API, we can access a 320 × 288 16-bit ir and depth stream as well as four visible-light tracking cameras (VLC): Grayscale cameras (640 × 480 8-bit). They are positioned like this  as well as a 2272x1278 RGB stream, is there any way we could adapt these streams to work with the SDK? Any advice how to best go about this? We are more than happy to provide more details please don’t hesitate to reach out if you have more questions!

as well as a 2272x1278 RGB stream, is there any way we could adapt these streams to work with the SDK? Any advice how to best go about this? We are more than happy to provide more details please don’t hesitate to reach out if you have more questions!

Thank you so much for taking the time!

Hi @mrcfschr

I’m sorry, but the ZED SDK only accepts input generated by ZED cameras (live, SVO, network stream).
